Back in 2018 I started working with robots to paint with Rob D Naja / 3D (Massive Attack). The initial project ran for just under a year and involved setting up two systems, one with paint brushes and one with spray cans. I developed the control software for the robots, the network interface, the front-end apps and the tooling to hold the paints and cans. There was a lot of exploration into how to make the robot paint like a human, always with the idea of GANs in our minds. Generative Adversarial Networks were really popular back in 2018, as generative AI was starting to create tangible outputs. GANs are great at creating raster images, but how do you take a pixel matrix and translate it into the motions of a brush to create physical artwork that is comparable to human-made artwork?

Considering the time constraints of that project, I chose to manage risk and at first take a more pragmatic approach, leaning on my experience in machine vision to deconstruct digital images into regions of colour and then, after some shape analysis, determine how to outline and fill those shapes. It worked reasonably well, but I always wanted time to explore an AI approach to teaching the robot how to paint.
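To give a flavour of that region-based idea, here is a minimal sketch in plain Python. It is not the software from the original project: it assumes the image has already been reduced to a small grid of palette indices, and the function names `label_regions` and `outline` are made up for illustration. It groups touching pixels of the same colour into regions, then picks out the edge pixels of a region, which is roughly what you would trace with the brush before filling.

```python
from collections import deque


def label_regions(grid):
    """Group 4-connected cells of equal colour index into regions.

    `grid` is a list of rows of palette indices (an assumed input format).
    Returns a list of (colour, cells), where cells are (row, col) tuples.
    """
    height, width = len(grid), len(grid[0])
    seen = [[False] * width for _ in range(height)]
    regions = []
    for r in range(height):
        for c in range(width):
            if seen[r][c]:
                continue
            colour = grid[r][c]
            seen[r][c] = True
            cells = []
            queue = deque([(r, c)])
            # Breadth-first flood fill over same-coloured neighbours.
            while queue:
                cr, cc = queue.popleft()
                cells.append((cr, cc))
                for nr, nc in ((cr + 1, cc), (cr - 1, cc), (cr, cc + 1), (cr, cc - 1)):
                    if (0 <= nr < height and 0 <= nc < width
                            and not seen[nr][nc] and grid[nr][nc] == colour):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            regions.append((colour, cells))
    return regions


def outline(cells):
    """Return the cells of a region that have a 4-neighbour outside it."""
    inside = set(cells)
    return [
        (r, c) for r, c in cells
        if any(n not in inside
               for n in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)))
    ]


# Tiny example: a block of colour 0 next to a strip of colour 1.
grid = [
    [0, 0, 0, 1],
    [0, 0, 0, 1],
    [0, 0, 0, 1],
]
regions = label_regions(grid)
edge_of_first = outline(regions[0][1])
```

In a real system the outline would then be ordered into a continuous path and the interior filled with strokes, which is where most of the shape analysis effort goes.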

Exploring New AI Approaches to Robot Motions

In early 2025 I submitted an application with an old colleague, Prof Mark Hansen from UWE/BRL’s machine vision group, to UWE’s new Bridge Studios seed projects. The studio sits on the edge of Bristol Robotics Laboratory in place of what was RIF/SABRE, an industry outreach program to welcome companies into the world of robotics. With that program completed, UWE have now opened up a creative technology hub. During my time in the lab I found myself more and more engrossed in creative technology, so this is a great move forwards for UWE. Although I secretly wish it had happened when I was working there.

Our application was to explore making robots paint, and we had our minds set on the huge Kuka arm they have there. Unfortunately, due to staffing challenges we couldn’t get access to the Kuka, so instead we had to work with the Universal Robots arms they had. I wrote briefly about setting up the UR arm previously. I had a few challenges getting ROS set up and working, but eventually got the robot moving mostly as I wanted. As always, I think with a bit more time to tweak the motion parameters I would be happy, but this really was a fail-fast R&D project, so getting caught up on fine details isn’t the objective.

The idea of the pipeline was to create an image in VR and pass it through a sequence of AI steps to eventually output robot motion. The first stage was to analyse the image using an LLM to describe what it thinks the image is. It then autogenerates a prompt to recreate the image, along with some random extra features such as style and mood. This outputs a much better image than the janky VR-drawn one. You can see an input image and output image below.

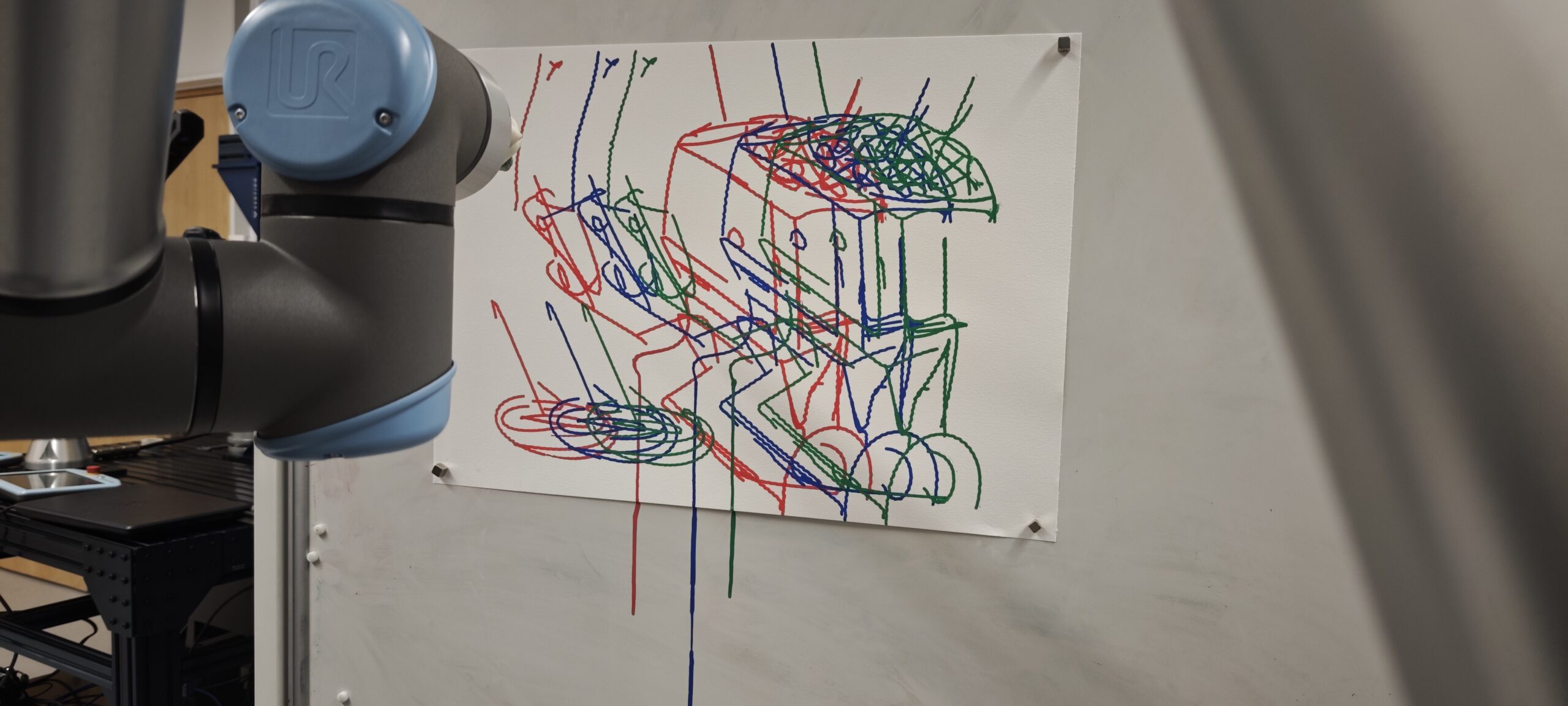

With this improved image we then have to convert it to robot motion paths. Here we leverage OpenAI’s CLIP model to generate lines that make up the image, which can be converted to vectors. These vectors are then easily converted to robot motion.
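That last step, going from vectors to robot motion, can be sketched roughly like this. It is only an illustration under some assumptions of mine, not the project code: strokes arrive as lists of pixel coordinates, the paper lies flat with its origin at the bottom-left corner, and the pen is lifted 1 cm between strokes. The names `Waypoint` and `strokes_to_waypoints` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


@dataclass
class Waypoint:
    x: float  # metres across the paper
    y: float  # metres up the paper
    z: float  # metres above the paper; 0.0 means the pen is touching


def strokes_to_waypoints(
    strokes: Sequence[Sequence[Point]],
    image_size: Tuple[float, float],
    paper_size: Tuple[float, float],
    lift: float = 0.01,  # assumed pen-up height in metres
) -> List[Waypoint]:
    """Scale pixel strokes onto the paper and add pen-up moves between them."""
    img_w, img_h = image_size
    paper_w, paper_h = paper_size
    # Uniform scale so the drawing fits without distortion, then centre it.
    scale = min(paper_w / img_w, paper_h / img_h)
    off_x = (paper_w - img_w * scale) / 2
    off_y = (paper_h - img_h * scale) / 2

    def to_paper(p: Point) -> Point:
        px, py = p
        # Image y grows downwards, paper y grows upwards, so flip it.
        return off_x + px * scale, off_y + (img_h - py) * scale

    waypoints: List[Waypoint] = []
    for stroke in strokes:
        if len(stroke) < 2:
            continue  # a single point is not a line, so skip it
        pts = [to_paper(p) for p in stroke]
        # Hover above the start, draw along the stroke, then lift off.
        waypoints.append(Waypoint(pts[0][0], pts[0][1], lift))
        for x, y in pts:
            waypoints.append(Waypoint(x, y, 0.0))
        waypoints.append(Waypoint(pts[-1][0], pts[-1][1], lift))
    return waypoints


# One diagonal stroke across a 100 x 100 px image onto 20 cm square paper.
path = strokes_to_waypoints([[(0, 0), (100, 100)]], (100, 100), (0.2, 0.2))
```

Each waypoint would still need transforming from the paper frame into the robot's base frame and handing to whatever motion planner is driving the arm, which is where the motion parameter tweaking comes in.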

The video below quickly shows the pipeline from VR to robot motion. At this stage we are just using pens and one colour to prove it works. However, it wouldn’t take much more work to create layers, add colour changes and use paint brushes. Something my new robot is just about ready to do.

But Why?

Whilst playing with robots and using them as a tool to create art is fun, it does have some real-world applications. Firstly, it can make creativity accessible to those with mobility challenges. If you can’t move your hands or feet, the idea of using a paint brush seems impossible, but collaborating with a robot makes that achievable.

That idea of collaborating is also interesting. If you can have a robot studio companion whilst you create, you can run through ideas more quickly. You can create at different scales, and you can introduce both repeatability and intentional noise in that repetition. In some ways, these are all achievable with more time, money and extra people, but those aren’t always accessible, and finding the tool that works for you is part of the creative practice.
